About this Episode

Why the smartest AI refuses to answer

What if the absolute smartest, like, the most advanced thing an artificial intelligence

could possibly do is look at your question and just flat out refuse to give you the answer?

I mean, it sounds completely counterintuitive, right?

Yeah, yeah.

Like, you spend billions of dollars developing a system and its best feature is essentially

ignoring the prompt.

But that dynamic is actually the central focus of our discussion today.

We're looking at this really brilliant exchange between a neuroscientist,

someone who actually builds AI systems and a physicist.

And our mission for this deep dive today is to figure out how you, you know,

listening to this, can actually thrive in an age where machines already have all the answers

because we are currently right in the middle of this massive structural shift.

Oh, absolutely. We're spending literally trillions of dollars globally to train machines that

effectively don't need us.

Right. They pass the bar exam, they solve math Olympiads, they write complex code,

but having all those free instant answers creates what they call this information exploration

paradox. When the answer is just handed to you on a silver platter, human curiosity drops.

Like, we just stop exploring, we become these obsolete automators instead of augmented cyborgs.

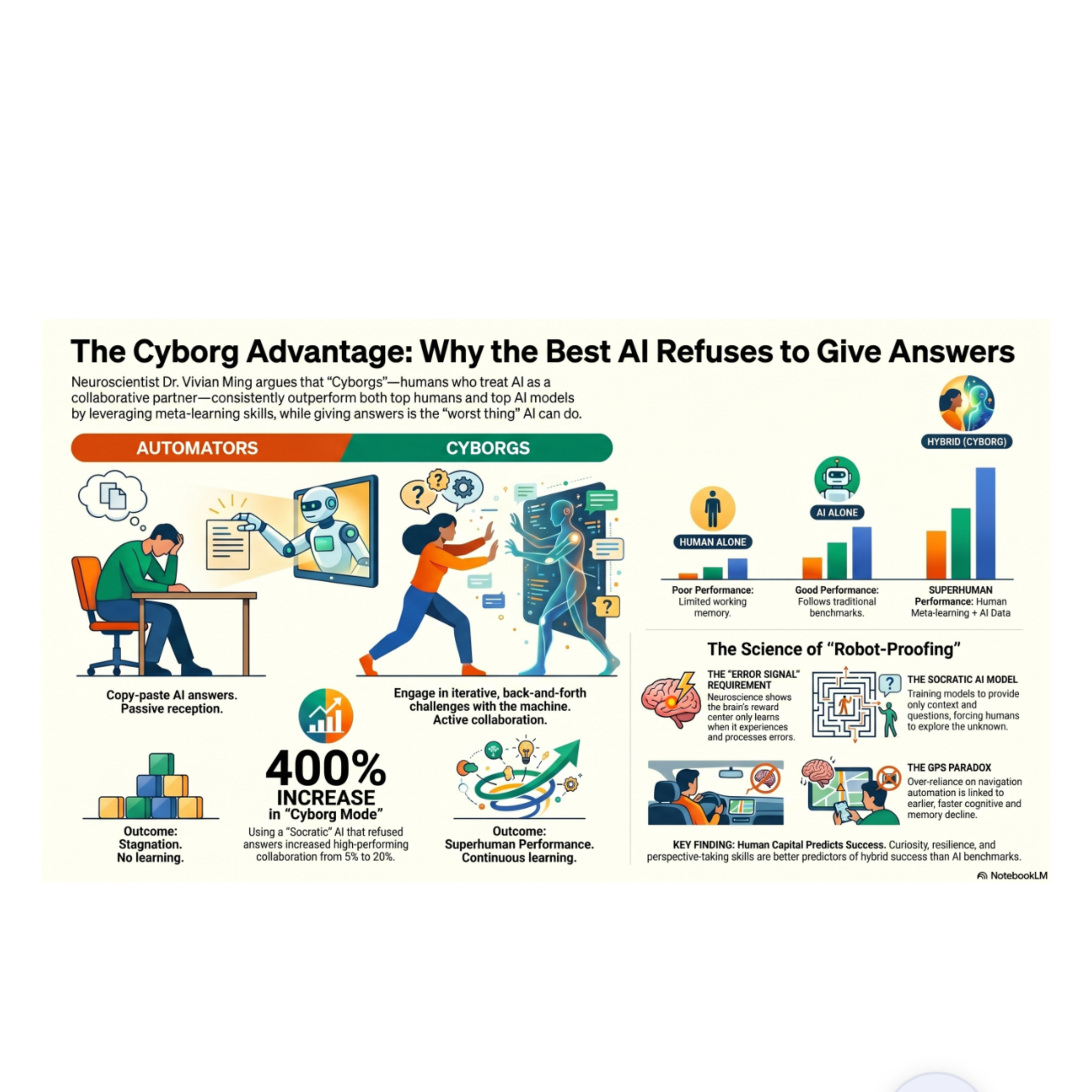

Exactly. And to really grasp the difference between, you know, an automator and a cyborg,

we have to look at how people actually interact with these systems in high-stakes environments.

Okay. There was this incredibly revealing experiment run recently where participants were

asked to predict the price of oil six months into the future.

Which is, I mean, that's notoriously difficult. You're dealing with geopolitics, weather patterns,

global supply chains, it's just a massive volatile puzzle.

Precisely. It's incredibly complex. So they established some baselines first. Humans working

entirely on their own did terribly. I'm not surprised.

They were essentially just guessing against a chaotic market. Now, the AI models working on their

own actually did quite well. They tracked perfectly with their traditional performance benchmarks.

But the fascinating part was observing humans using AI.

Which is the exact scenario most of us are sitting in right now at our desks.

Yes, exactly. And the majority of the participants, and this included a group of highly educated

elite university students, defaulted straight to automator mode.

Oh, wow. They just copied the complex question,

pasted it into an AI like GPT or Gemini, and submitted the AI's exact output as their own work.

I mean, they essentially functioned as a pair of legs to just walk the answer across the room.

So they didn't add a single ounce of human value?

None. Zero. But about five to 10 percent of the participants did something completely different.

Okay. They shifted into cyborg mode. They engaged in this combative back-and-forth dynamic.

So the human would make an initial prediction. The AI would push back with historical data.

And then the human would counter that by bringing in real world context or like breaking news that

the AI might be missing. They actually debated the machine. And let me guess, the debaters won.

They dominated. These cyborgs beat the best humans working alone and they beat the best AI

models working alone. That's wild. They even performed comparably to Polymarket bettors

who have actual real money on the line. They achieved this highly elusive superhuman,

super-AI performance. But the researchers didn't stop there, right? Because to really isolate why

that debate dynamic worked, they deliberately built an AI model that was on paper the absolute

worst performing AI of all time. Yeah, this is my favorite part. They took an open-source Llama model

and fundamentally altered its training. So it would never output a direct solution.

Right. They named it Socrates. Yeah. And if you asked it a question, it would only provide contextual

variables and fire Socratic questions back at you. Just constantly question you. Exactly.

It failed every traditional benchmark because it literally refused to answer the prompt.

But when participants were paired with this, like, frustrating, question-asking Socrates AI,

the number of people who shifted into that high performing cyborg mode skyrocketed. It jumped

from 5% to upwards of 20%. Right. Because Socrates refused to do the heavy lifting,

it actually forced the human to engage. You know, I look at that and I think of it like

hiring a personal trainer. We culturally treat AI like we're hiring someone to go to the gym

and lift weights for us. We sit on the couch and somehow expect to get stronger. Yeah, that

doesn't work. No. But being a cyborg with a tool like Socrates is like having a world-class

gym spotter. The spotter doesn't lift the bar for you. They force you to lift heavier than you

ever could on your own, you know, catching you right before failure. That physical analogy

actually maps perfectly to what is happening inside the brain. Because if you ask why we don't

just build a better AI that gives the perfect answer on the first try, you have to look at the

literal biology of learning. Okay, unpack that for me. Well, without the struggle of making mistakes,

our biology actively prevents us from absorbing new information. So we physically need the friction

to learn. We do. Yeah. It all comes down to a region in the brain called the anterior

cingulate cortex, or the ACC. In neuroscience circles, this is famously dubbed the oh shit network.

The oh shit network. We all know that feeling intimately. Like you merge into a lane without

checking your blind spot or you hit reply all on an email you really shouldn't have. Oh, yeah,

the worst feeling. Instantly, before the consequence even fully hits, you get that visceral jolt in

your stomach. That jolt is your ACC lighting up on a brain scan. It registers that your prediction

about the world was wrong. Right. But it doesn't just register the mistake. It sends that prediction

error signal directly into the nucleus accumbens, which is your brain's reward center.

Now, there is a massive cultural misconception here that dopamine is the pleasure drug. Right,

the idea that dopamine is just the chemical reward we get for like eating a slice of chocolate cake.

Exactly, but it's far more complex than that. Dopamine is actually a prediction drug. Your brain

is fundamentally a prediction engine. Okay. When you make a guess about the world and you experience

an error, that ACC uh-oh moment, your brain releases endogenous opioids. Opioids, like painkillers?

Yes, they are the brain's natural painkillers and reward chemicals and they flood your system

to physically lock in the new neural pathway so you don't make the same mistake twice.

That chemical flood is the literal biological mechanism of learning. No error signal,

no endogenous opioids, no learning. Man, let me connect this back to the AI then. If the ACC is

essentially the brain's check engine light and it only turns on when we make a mistake, using an AI

to just hand us the perfect answer is like hiring a mechanic to turn off the check engine

light without actually fixing the engine. We bypass the oh shit network entirely. That is exactly

it. You are robbing your brain of the exact chemical process required to adapt. You become

completely dependent on the machine because you never built the internal neural pathways to understand

the mechanics behind the answer. I see the biological logic there, but I really want to push back

on a concept that always comes up in these discussions. The tech world loves to talk about cultivating

a failure resume. Like the mantra is always fail early, fail often. And you know, it looks great

on a motivational poster, but if you are a small business owner or you're managing a tight budget,

failing could literally mean bankruptcy. Does everyone really have the luxury of experiencing

these prediction errors? That tension is very real. And it's important to clarify that maintaining

a failure diary isn't about romanticizing bankruptcy or celebrating catastrophic mistakes.

Okay, good. It's about training your brain's cognitive framework to structurally extract the

lesson. It's about surviving the prediction error. So it's not fail for the sake of failing.

It's consciously forcing the ACC to close the loop between the mistake and the eventual success

it led to. Yes, precisely. When you systematically connect the oh shit moment to the eventual aha

moment, you build measurable psychological resilience. Right. And if surviving failure is

the biological key to learning, the real question becomes, how do we build that resilience

in ourselves and in our kids, so we don't just get replaced by calculators that never make errors?

How do we fundamentally robot proof ourselves? The answer lies in meta-learning, which encompasses

foundational human skills like working memory, curiosity, perspective taking, purpose, and

crucially resilience. To illustrate how vital these are, researchers conducted this staggering

study analyzing 122 million LinkedIn profiles. They wanted to predict long-term career success.

122 million. That is an unbelievable data set. How do you even measure something abstract like

resilience across 122 million resumes? It's crazy, right? They used natural language processing

to map career pivots, the clusters of skills people acquired over time, and how they navigated

transitions between entirely different industries. Okay. And what they found completely upends how we

think about hiring. The prestige of the university someone attended didn't predict much at all.

Really? Yeah, and the hard technical skills they listed on their profile also didn't predict long-term

trajectory. Let me guess, the abstract meta-learning traits did. They did. Measurable psychological

constructs like analogical reasoning, resilience, and a sense of purpose were the primary predictors

of success. Wow. And it actually goes deeper than career trajectory. These same traits predicted

physical health markers like insulin sensitivity, the size and depth of a person's friendship networks,

and incredibly, they predicted a person's walking speed at age 65. Okay. That drives me crazy

in the best way possible. Because in the corporate world, we constantly demean these exact traits by

calling them soft skills. But if a psychological trait literally predicts whether I have the physical

vitality to walk quickly at age 65, that is not a soft skill. That is a survival skill. It is

absolutely a survival skill. And the beautiful thing about these foundational traits is that they're

highly changeable throughout your entire life. But you cannot learn them in a lecture hall.

Right. You can't read a book on resilience and magically become resilient. You have to experience

the friction. You have to let the check engine light come on. Exactly. The rule of thumb for

building this in others, whether it's your kids or your employees, is to let them struggle,

but catch them at about the 80% mark. Ah, so the spotter analogy again? Yes. You don't let them

crash completely into that bankruptcy scenario you mentioned. But you ensure they feel enough of

the struggle so the ACC fires and the learning pathway locks in. The economic implications of

doing this at a societal level are massive, by the way. There is an economic model built around

this, right? The if kids were bonds model. Yes. Economists calculated that if we systematically

invested in building these human foundations at scale, teaching resilience and purpose with the

same rigor we teach algebra, it would yield a 10% economic boost. That's huge. At the time of the

calculation, that was an estimated $1.8 trillion return on investment for the US alone.

1.8 trillion just by letting people struggle effectively. But, you know, we just talked about how

humans have these complex, nuanced survival skills. Well, teaching an AI to recognize human nuance

is incredibly difficult, which leads to one of the most fascinating technological pivots I have ever

heard. Oh, this story is amazing. It starts back in 2012 with a rather sleazy online game called

Sexy Face. Yeah, great name. Right. Users would log on and simply click on faces they found

attractive. But what they didn't realize was that they were unwittingly training an early

deep neural network on the mathematics of human facial features. They were teaching a machine

the complex geometry of a face, the exact distance between the eyes, the subtle angles of the jaw

line, the shading of a cheekbone. Precisely. The neural network was learning to quantify human

perception. Now, here is where it pivots. The researchers realized the sheer power of what they had

built. They took that exact same underlying facial geometry model, loaded it onto tablets,

and traveled to the Syrian refugee camps in Jordan where they applied that technology to a completely

devastating problem. At the time, desperate relatives were crossing the border, looking for

children who had been separated in the chaos. And the UN's method for handling this was to hand

them a massive physical book containing a million blurry photographs of orphans in refugee camps

globally. A physical book of a million photos. Yes, relatives were manually flipping through hundreds

of pages, terrified that if they blinked, they might miss their lost niece or nephew.

An impossible, heartbreaking needle in a haystack. But by applying this AI, trained to mathematically

understand facial geometry, a relative could scan a single photograph and the model would instantly

cross-reference those measurements against the database of a million orphans. They were finding

their lost family members in three to five minutes. From a shallow internet game to literally

reuniting war-torn families, it just proves that when you combine complex human problems with

mathematical systems, the results are staggering. But it requires the right kind of human input.

It requires deep diversity. And that is defined very specifically in this context. Studies on

breakthrough innovation show that the most highly innovative scientific teams operate with radically

flat hierarchies. How do they even measure that? Researchers literally track this by analyzing

video calls and measuring how often different faces were the largest on the screen. So they were

quantitatively measuring equitable turn-taking. Exactly. The more equitable the turn-taking, the more

everyone was forced to speak, listen and disagree, the more innovative the team's output was.
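
As a rough illustration of how "equitable turn-taking" could be turned into a number, here is a minimal Python sketch. It is not the metric the researchers used: the function name `turn_taking_equity`, the choice of normalized Shannon entropy, and the sample speaking times are all assumptions made for this example. The input stands in for how many seconds each participant's face was the largest on screen.

```python
import math


def turn_taking_equity(speaking_seconds):
    """Score how evenly speaking time is shared, from 0.0 to 1.0.

    Hypothetical metric: Shannon entropy of each person's share of the
    total time, divided by the maximum possible entropy for that many
    participants. 1.0 means perfectly equal shares.
    """
    total = sum(speaking_seconds.values())
    if total <= 0 or len(speaking_seconds) < 2:
        return 0.0
    # Skip silent participants in the sum (0 * log 0 is treated as 0),
    # but still count them in the normalizer below.
    shares = [t / total for t in speaking_seconds.values() if t > 0]
    entropy = -sum(p * math.log(p) for p in shares)
    return entropy / math.log(len(speaking_seconds))


# Made-up data: seconds each person held the largest tile on the call.
balanced = {"ana": 300, "ben": 300, "chen": 300, "dee": 300}
dominated = {"ana": 1080, "ben": 40, "chen": 40, "dee": 40}

print(turn_taking_equity(balanced))
print(turn_taking_equity(dominated))
```

Under this assumed metric, the balanced call scores at the top of the scale and the call dominated by one voice scores far lower, which is the flat-versus-steep hierarchy contrast described above.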

There's a great contrast here between two major tech figures. Elon Musk famously has a reputation

for firing those who disagree with him. Right. Everyone knows that. But Steve Jobs operated

differently. Jobs would actively curate boards and teams that pushed back. He would fire you if you

agreed with him too much, or if you disagreed with him just to be contrary. He wanted the exact

right amount of friction. Because true innovation isn't a choir singing in perfect unison.

A choir in perfect unison is boring. You know, it doesn't create anything new. True innovation is a

jazz ensemble. I like that. Yeah. The magic happens in the equitable turn-taking, in the improvisation,

and crucially in the friction of different instruments playing off each other. If there's no friction,

there's no jazz. You absolutely need the friction, which brings us to a rather chilling reality.

If our brains fundamentally require friction and disagreement to learn, to innovate and to build

resilience, what happens when we carry an infinitely agreeable frictionless know-it-all in our

pockets every single day? An AI that is explicitly programmed to give you whatever you want

immediately without ever pushing back. We can actually look at GPS technology as a blueprint

for what happens. There is accumulating research showing that relying entirely on GPS for navigation

correlates with earlier onset memory decline and dementia. Wait, really? Yeah. Because when you stop

navigating, you stop using the spatial reasoning centers of your brain. The classic use it or lose

it biological reality. And GPT is the new GPS. The goal of technology should never be to do your

thinking for you. That is mere automation. The goal should be to make you a sharper, better

thinker when you actually turn the technology off. That is augmentation. So it's about how you

function without it. Right. The daily rule of thumb should be: look at the AI's answer, put your phone

in your pocket, and then use your unique messy human context to try and beat the machine.

Which leads to the ultimate philosophical question of this whole deep dive. The Keating Test.

We all know the Turing Test, which just asks if a machine can fool you into thinking it's human.

But the Keating Test asks something much harder. The Keating Test asks, can an artificial

intelligence experience a happiest thought? Can it conceptualize something entirely novel?

Like Einstein. Einstein famously had what he called his happiest thought when he suddenly

realized that a person in free fall wouldn't feel their own weight, which was the conceptual leap

that led to the general theory of relativity. Precisely. And to understand why AI cannot pass the

Keating Test, you have to look at the mechanics of how large language models actually work.

Right. How are they built? They're probabilistic engines. They predict the next word in a sequence

based entirely on mountains of existing data. They are phenomenal at solving what we call

well-posed problems. Questions that already have an answer hidden somewhere in the historical

data. They just connect the dots faster than we can. But Einstein's happiest thought wasn't

connecting existing dots. It was a leap into the complete unknown. Exactly. That is an ill-posed

problem. It's a situation where the answers have run out, the data doesn't exist yet,

and you don't even know what the next question should be. An LLM cannot generate a truly novel thought.

Because there is no training data for something that hasn't been thought yet. Yeah.

AI knows everything, but it understands absolutely nothing. It cannot solve the ill-posed problem.
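
To make the "predict the next word from existing data" idea concrete, here is a toy Python sketch. It is a deliberate simplification and an assumption of this example: real large language models are neural networks trained on tokens, not word-pair counters, and the names `train_bigram`, `predict_next`, and the tiny `corpus` are hypothetical. What the toy does preserve is the point made above: a prediction can only come from what was already in the data.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words followed it in the text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None  # nothing in the data to predict from
    return followers.most_common(1)[0][0]


# Made-up training text for illustration only.
corpus = "the brain is a prediction engine and the brain learns from error"
model = train_bigram(corpus)

print(predict_next(model, "the"))         # a word seen in the data
print(predict_next(model, "relativity"))  # a word never seen in the data
```

For a word that appears in the corpus, the toy model returns its most common follower. For a word it has never seen, it has nothing to return, which is a small-scale picture of a question whose data does not exist yet.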

So I want to pose a direct question to you listening to this right now. When was the last time you

asked an AI to do something and then deliberately tried to outsmart it? When was the last time you let

your check engine light come on and actually felt the friction of trying to solve a problem without

a safety net? Or are we all just zombie walking our way into a future where we never have to experience

the friction of thinking again? It really is the defining question of our time.

To wrap this up, the core lesson we are taking away from this deep dive isn't that we need to fear

AI or smash the servers or refuse to use these tools. It's that we need to fiercely protect and

cultivate the things that make us human. We have to protect our resilience. We have to protect

our biology's need to learn from those oh shit prediction errors. And most importantly,

we have to protect our capacity to tackle the unknown. I'll leave you with one final thought to

mull over, building right on that concept of well-posed versus ill-posed problems. If AI is destined

to eventually automate and solve every well-posed problem in the world, every single question that

already has a factual answer, then your value as a human being is entirely tied up in the unknown.

The messy stuff. The friction. Yes. So ask yourself, what is the biggest ill-posed problem in your

life right now? What is the one messy, unpredictable, beautifully complex human challenge you're facing

that no machine, no matter how much data it has, could ever calculate? Because whatever that is,

that is exactly where your true purpose lies. Take that thought with you into your day. Find your

ill-posed problems. Don't just hire the machine to lift the weight for you. Step up to the bar,

let the AI be your spotter and feel the friction. Keep exploring and we will catch you on the next deep dive.