About this Episode

Mathematics_without_infinity

Imagine you're just counting: one, two, three, four, right?

And you assume, you know, quite naturally that you can just keep going forever.

Like you picture this infinite road of numbers just stretching out ahead of you.

Exactly. Yeah. It feels like the ultimate safety net of reality.

Yeah. The absolute bedrock of mathematics. But I mean, what if you eventually hit a wall,

a literal wall, right? What if infinity isn't actually real? What if it's just a mathematical

illusion, like a conceptual crutch we've been relying on for centuries, simply because we didn't

know any better? I mean, it is a deeply unsettling thought. We're so used to the idea that numbers

just keep going. We take it for granted. We do. Yeah. But the truth is, the moment mathematicians

actually try to pin infinity down, like, to define it rigorously, the math starts doing some very

strange contradictory things. It's messy. Very messy. It creates a genuine foundational crisis.

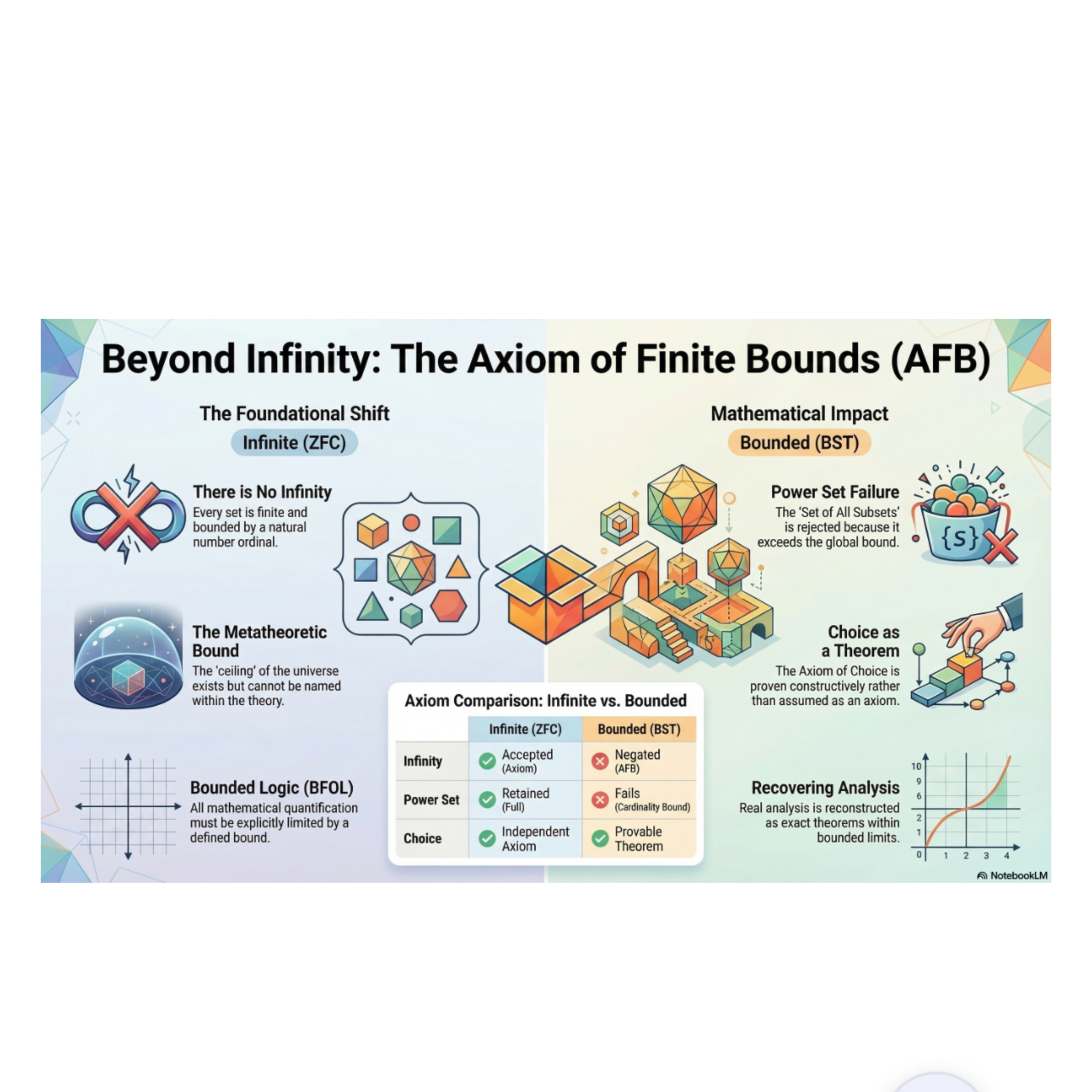

Okay. Let's unpack this because for you listening, we are looking at a working paper today that

takes that crisis head on. It really does. It's this dense, incredibly ambitious mathematical paper

titled The Axiom of Finite Bounds. And the mission of this paper is something that frankly

sounds impossible to anyone who has, you know, taken a high school math class. It sounds completely

counterintuitive. Yeah. It is trying to rewrite the entire foundation of mathematics by completely

eliminating the concept of infinity, which is a massive undertaking. The author is constructing a

rigorous alternative foundation for finite mathematics, which they call bounded set theory.

Bounded set theory. Right. And they're attempting to build this entire mathematical universe from

just a single axiom. We really need to establish the stakes here for you because we aren't just

talking about a fun philosophical debate over a cup of coffee. No, this is practical. Exactly.

This paper meticulously reconstructs the core machinery of calculus, geometry, and complexity theory

without ever using an infinite set. So if this paper's logic holds up, it means the entire

architecture of modern science like the math that builds the bridges you drive on and, you know,

the algorithms that run the smartphone in your pocket doesn't actually need infinity to function.

Precisely. It challenges the fundamental assumption that infinity is a necessary ingredient for complex

mathematics. Wow. But to really understand why this paper is so revolutionary and how it manages

to, you know, pull this off, we first have to ask a crucial question, which is why did previous

attempts fail? Right. Because some of the greatest minds in mathematical history tried to kill

infinity long before this paper was ever written. Oh, they absolutely did. And to understand the

mechanics of this new theory, we really have to look at that historical graveyard of finitism.

The graveyard. Yeah. The most famous early attempt was by Leopold Kronecker back in the 1880s.

He famously declared, "God made the integers; all else is the work of man." I love that quote.

It's a great quote. He philosophically rejected any mathematical concept that couldn't be constructed

step by step from whole numbers using, you know, strictly finite operations. But Kronecker hit a

massive wall, didn't he? He couldn't make it work for the math we actually need in the real world.

Yeah, he failed completely when it came to reconstructing continuous math. Think about the real

number line or the smooth curves you see on a graph. Okay. To do calculus, you rely on concepts like

the intermediate value theorem. Let's break that down for a second for everyone. The intermediate

value theorem is basically the rule that says if you draw a continuous line from point A to point B

on a graph, your pen has to pass through every single value in between without lifting off the

paper. Exactly. And to guarantee that your pen passes through every value, you need a line made

of infinitely many dense points. Ah, right. If you only believe in whole numbers like Kronecker did,

your graph isn't a smooth line. It's just a series of disconnected dots and you can't do calculus

on disconnected dots. You really can't because he didn't have a bounded finite substitute for that

smooth infinite line. His entire program just stalled out. So Kronecker fails. Then fast forward to

the 1920s and we get David Hilbert. Now, Hilbert didn't want to kill infinity, right? No, he

wanted to save it. Right. He wanted to prove it was completely safe to use. He tried to justify

all of infinite math using only finite combinatorial logic. Yes. He wanted an ironclad, finite proof

that infinity wouldn't break mathematics. And Hilbert gets stopped dead in his tracks by Kurt Gödel.

The famous Gödel. Yeah. Gödel comes along with his incompleteness theorems and just shatters Hilbert's

dream. He really did. I always find Gödel's theorems fascinating, but I mean, they can be

incredibly dense. How exactly did he break Hilbert's finite proof? Well, think of it like the classic

liar's paradox: "This statement is false." Right. If the statement is true, then it must be false. If it's

false, then it must be true. It's a loop that just breaks logic entirely. Gödel brilliantly figured

out a way to translate a similar paradox into pure mathematical equations. He essentially forced

a mathematical system to say, you know, "This mathematical statement cannot be proved." Wow.

So if the math can prove it, the math is contradictory and broken. Exactly. And if the math can't

prove it, then the math is incomplete. You got it. Gödel proved that any consistent system strong

enough to do basic arithmetic physically cannot prove its own consistency. So you can't use finite

tools to put a perfect secure fence around infinity. No, you can't. Which leaves mathematicians

scrambling. You get people like L.E.J. Brouwer and his intuitionism. All right, Brouwer. Brouwer decides

math is purely a mental construction. He rejects actual completed infinity like the idea of a fully

existing infinite set. But he replaces it with potential infinity. Right. The idea of an ongoing

never-ending process, which honestly feels like a massive cheat to me. It kind of is. It's like saying,

I refuse to believe in an infinite pie. I only believe in a pie that never, ever stops baking.

You're still relying on something endless. That's a great way to put it. And that brings us to

the closest predecessor to our working paper today, Wilhelm Ackermann's system, known as ZF-Not-Infinity.

Yes. ZF-Not-Infinity. That translates to hereditarily finite set theory. Hold on. Let's translate

the translation. Hereditarily finite. In plain English, does that just mean you have sets of things

and sets inside those sets and sets inside those all the way down? But every single layer

is strictly finite. Exactly. There are absolutely no infinite collections anywhere. That is exactly

what it means. Ackermann took the standard rules of math and just negated the axiom of infinity.

He explicitly said, no infinite sets exist. Okay, but. But here's the critical flaw in his system.

Yeah. He didn't impose an upper bound. And this is where the house falls down. Right. Because Ackermann

built a mathematical room and proudly declared there is no infinite ceiling in this room. Right.

But he wrote the architectural rules so that you are perfectly allowed to keep stacking the bricks,

building the walls taller and taller forever. You never actually hit a roof.

If we connect this to the bigger picture, any theory whose domain is unbounded is inherently

committed to infinitely many distinct objects. No. Even if Ackermann stubbornly refuses to collect

all those bricks into a single completed infinite set, the infinity is still baked right into

the structure of his universe. It's an illusion of safety. Precisely. And this is exactly why all

those previous historical programs stalled. They didn't actually remove the commitment to infinity.

They just relocated it. They hid it in the architecture. Exactly. So simply putting a "not"

in front of the word infinity didn't work. The walls just kept growing. The author of our source

paper realized that to actually banish infinity, you couldn't just change the rules of the sets.

No. You had to restrict the very grammar, the actual foundational language of mathematical logic

itself, which brings us to bounded first-order logic or BFOL. Right. So in standard mathematical

logic, you are allowed to make sweeping universal statements. You can say for all X, meaning literally

every possible number or object in the mathematical domain. Just a blanket statement.

Yeah. But in BFOL, that kind of unrestricted grammar is completely illegal. You cannot just

gesture vaguely at the horizon and say for all X. So what are you saying instead?

The logic forces you to say, for all X less than or equal to T, you must specify the limit.

Okay. I have to stop you here because I don't buy this. If we just artificially say we aren't

allowed to talk about all numbers, aren't we just blinding ourselves on purpose? It feels that way.

Yeah. It feels like tying our hands behind our backs. How can we trust that this math

is a valid foundation if we don't even know where the ultimate ceiling is? It's a very natural

objection. But you have to understand why the theory forbids you from naming that absolute

ceiling. Okay. Why? Let's look at the axiom of finite bounds itself, specifically formulation B

in the paper. It states that every model of bounded set theory is perfectly finite. There is an

absolute maximum. Okay. But as a strict rule, the theory cannot name or interact with its own

ceiling. It is a meta-theoretic constraint. But why hide the ceiling? Because if the theory

could see its own ceiling, it would instantly trigger a catastrophic logical crash known as

the Burali-Forti paradox. The Burali-Forti paradox. Yes. Let me walk you through the mechanics of it. Imagine

the absolute maximum bound of our universe was an object, a set that existed inside the theory.

Let's call this maximum set omega. Okay. I have omega. The biggest possible thing. Right.

Now, because omega is a set inside your mathematical theory, the standard rules of your theory

apply to it. Sure. And one of the most basic rules of set theory is that you can take any set

and combine it with itself to make a new slightly larger set. You take a set A and you add set A

to it. Okay. So you take your maximum set omega and the rules tell you that you can form omega

union {omega}. Oh, I see. You just added one to the absolute maximum. You just built something

bigger than the biggest possible thing. Exactly. You create a logical contradiction that

destroys the entire system. Wow. The maximum bound cannot be an object inside the domain

because the internal rules of the domain will always allow you to build something bigger.
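
The "add one to the maximum" step described above can be sketched with Python's immutable sets. This is only an illustration, assuming the operation the hosts mean is the usual successor construction (a set joined with the set containing itself); the names `successor`, `build`, and `candidate_max` are hypothetical and do not come from the paper.

```python
def successor(s: frozenset) -> frozenset:
    """Return s union {s}: the set s with s itself added as one more element."""
    return s | frozenset({s})


def build(n: int) -> frozenset:
    """Apply successor n times, starting from the empty set."""
    x = frozenset()
    for _ in range(n):
        x = successor(x)
    return x


# Pretend this 5-element set is the "biggest possible thing" in the universe.
candidate_max = build(5)

# The ordinary rule still applies to it, and the result is strictly bigger.
bigger = successor(candidate_max)

print(len(candidate_max), len(bigger))   # sizes before and after
print(candidate_max in bigger)           # the old "maximum" is now just a member
print(candidate_max < bigger)            # and a proper subset of the new set
```

Whatever set is chosen as the supposed maximum, the same call produces something one element larger, which is the contradiction the hosts describe.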

That makes total sense now. It feels exactly like being dropped into a massive video game universe.

Oh, that's a good way to look at it. Yeah. Like, you know, logically that there has to be an

edge to the map because it's a piece of software running on finite memory. Right. But the game's code

explicitly prevents your character from ever walking to those exact coordinates. Because if you

interacted with the edge, the physics engine would break and the game would crash to the desktop.

That is a brilliant analogy. The bound is real. But to keep the game running flawlessly,

it has to be outside the system's ability to manipulate it. Right. By making the bound a

meta-theoretic constraint, like hiding the edge of the map from the character, the Burali-Forti paradox

completely vanishes. So we've successfully hidden the ceiling to prevent the universe from crashing.

But I mean, if we fundamentally change the rules of how big things can get,

doesn't that break the rest of the house? It definitely shakes things up. Yeah.

What happens to the math we actually use every day? Standard mathematics is built on a very specific

set of foundational rules, right? Yes. Standard math runs on ZFC, or Zermelo-Fraenkel set theory with

the axiom of choice. But when you enforce this new bounded first order logic, ZFC goes through a

massive structural transformation. So certain classic rules simply cannot survive in a finite

universe. And the most dramatic casualty by far is the power set axiom. Let's make sure we are

clear on what the power set axiom actually does. In simple terms, a power set is just a set of

all possible combinations like all the subsets you can make from an original set. If I have a set

with an apple and a banana, the power set includes a set with just the apple, a set with just the

banana, a set with both and a set with neither. Correct. In classic infinite math, you can always take

the power set of anything. But in bounded set theory, this axiom completely fails. Completely.

Completely. And the mechanism behind why it fails is fascinating. It all comes down to cardinality.

The sheer number of items involved. Power sets grow exponentially. Specifically, they grow at a

rate of two to the power of n. Right. So if I have three items, the power set has two to the third

or eight combinations. If I have 10 items, it's two to the tenth, which is what,

1,024 combinations. Exactly. Now, imagine our bounded mathematical

universe has a global maximum capacity. Let's say just for example, the absolute limit of the

universe is a billion objects. Okay. A billion. The paper shows that it takes a surprisingly

tiny set to completely shatter that limit. If you have a set with just 30 elements,

its power set is two to the 30th. And two to the power of 30 is over a billion. Yes. So in

a universe capped at a billion, the power set of a measly 30 element set physically cannot exist.

It would require more objects than the entire universe contains. That's wild. The power set axiom

is rejected because it acts as an engine of rapid exponential explosion. It is mathematically

incompatible with a global bound. Here's where it gets really interesting for me. Because you

assume that rejecting a foundational axiom like the power set would just destroy math entirely.

You would think so. But it actually seems to clean it up. Let's look at the axiom of choice.

In classic math, you need this heavy, complex axiom just to prove that you can blindly pick

one item out of an infinite number of buckets. But if you live in a bounded universe,

you don't have an infinite number of buckets anymore. Exactly. So we don't need the axiom at all.

It downgrades from a foundational assumption into just a simple, provable theorem.

Because the buckets are finite, you literally just write a finite list and pick an item from it.

It's common sense. And we see the exact same story with the axiom of foundation.

In classical ZFC, foundation is an axiom required specifically to prevent pathological nightmares

like infinite descending membership chains. Explain what an infinite descending chain is.

Imagine a box that contains a smaller box, which contains a smaller box,

which contains a smaller box going on endlessly forever. Like nesting dolls, but endless.

Right. Classic math needs a special rule to outlaw that otherwise the logic gets tangled.

But in our new, strictly finite universe, an infinite descending chain is physically impossible.

Right. Because you would eventually run out of boxes.

Precisely. It must hit a bottom. So the axiom of foundation is no longer something you have to

assume. It becomes an automatic structural theorem of the finite universe.

This is amazing. No power set means no exponentially exploding infinities.

No infinite axiom of choice means no Banach-Tarski paradox.

Ah, Banach-Tarski. That is perhaps the most famous and most bizarre result in all of infinite

mathematics. For those who haven't encountered it, the Banach-Tarski paradox is a mathematically

proven theorem in classic ZFC, where you can take a solid 3D sphere, cut it up into a few

scattered fractal-like pieces, and then just rotate and reassemble those exact same pieces

into two identical solid spheres. It's mind-bending. And both new spheres are the exact same

size as the original. It completely violates all laws of physical reality and conservation of mass.

And the only reason the math allows you to duplicate a sphere like that is because the pieces you cut

it into rely on non-measurable infinite sets of points. Right. When you manipulate those infinite

points, volume loses all meaning. But the moment you cap the universe and say,

no, sets must be finite, Banach-Tarski is dead. You can't magically duplicate a sphere anymore.

It genuinely feels like this paper is applying a massive software bug fix to the fabric of reality.

It really does. Because removing these axioms doesn't destroy the math we actually use to build

things. It simply forces explicit, rigorous thresholds. Thresholds? Yeah, the power set

operation still exists for small sets. If you need all the combinations of a five-element set,

the theory lets you build it perfectly fine. The math just now precisely calculates the threshold

where that operation breaks down. It demands quantitative accountability.
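
The threshold idea can be made concrete with a few lines of arithmetic. The sketch below assumes the hosts' illustrative cap of one billion objects (the paper's actual bound is not stated in the episode) and uses hypothetical helper names. It checks only the counting condition that the power set must have no more elements than the universe has objects.

```python
def power_set_size(n: int) -> int:
    """Number of subsets of an n-element set."""
    return 2 ** n


def largest_safe_size(bound: int) -> int:
    """Largest n with 2**n <= bound (requires bound >= 1)."""
    # For a positive integer, bit_length() - 1 is floor(log2(bound)).
    return bound.bit_length() - 1


BOUND = 10 ** 9  # the episode's example cap, not a value taken from the paper

n = largest_safe_size(BOUND)
print(n, power_set_size(n) <= BOUND)       # the last size that still fits
print(n + 1, power_set_size(n + 1) > BOUND)  # one more element overshoots
print(power_set_size(5))                   # a five-element set is nowhere near the cap
```

With this cap, the count fits for sets of up to 29 elements and overshoots at 30, matching the example in the conversation.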

Okay, so we've hidden the ceiling, we've stopped the exploding infinities, and we've bug fixed

the geometric paradoxes. But if mathematicians have lost these classic tools, how do they actually

prove things moving forward? That's the big question. They've lost their traditional engine for logic.

What replaces it? So the traditional engine you are referring to is classical Peano induction.

This is the domino effect of mathematical proofs. The way it works is, you prove a property holds

true for the number zero. Then you prove that if it holds true for any number n, it must also hold

true for the next number, n plus one. You tip the first domino, and it knocks over the next,

and the next. In classical math, you let that run forever, proving the property for all natural

numbers to infinity. But as we've established, Peano induction completely fails in bounded set theory.

Because if you let the dominoes fall forever, they will inevitably slam into the hidden ceiling.

Right. You can't assume an infinite unbounded domain. So the paper introduces two new engines to

replace it. The first is B-I-B-S-T, which stands for bounded induction for bounded set theory.

How does that work? It's essentially the domino effect, but with a strict safety protocol.

You explicitly state we are only knocking down dominoes up to this specific finite bound T.
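
As a rough picture of dominoes that stop at a stated bound, the sketch below runs the base case and the step from n to n plus one only for n below T. It is a loose executable analogy under that assumption, not the paper's formal induction schema, and `forall_bounded` and `bounded_induction` are hypothetical names.

```python
from typing import Callable


def forall_bounded(pred: Callable[[int], bool], t: int) -> bool:
    """Bounded quantifier: 'for all x <= t, pred(x)'."""
    return all(pred(x) for x in range(t + 1))


def bounded_induction(pred: Callable[[int], bool], t: int) -> bool:
    """Check the base case and every step n -> n + 1 for n < t.

    If both checks pass, pred holds for every x <= t. Nothing is
    claimed about numbers beyond the bound t.
    """
    if not pred(0):
        return False
    return all((not pred(n)) or pred(n + 1) for n in range(t))


def gauss(n: int) -> bool:
    """The sum 0 + 1 + ... + n equals n(n + 1)/2."""
    return sum(range(n + 1)) == n * (n + 1) // 2


def small(n: int) -> bool:
    """A property that is true only below 50."""
    return n < 50


print(bounded_induction(gauss, 100), forall_bounded(gauss, 100))
print(bounded_induction(small, 49))   # every domino up to 49 falls
print(bounded_induction(small, 100))  # the step from 49 to 50 fails
```

The second property shows why the bound has to be stated explicitly: the same argument succeeds for one choice of T and fails for a larger one.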

Okay, so it stops. Yes, it goes up step by step, n to n plus one, but it stops before it hits

the ceiling. It's very useful for foundational set theory. But there is a second engine introduced,

which is arguably much more profound. This is P-I-N-D, from a system known as S-1-2. P-I-N-D,

polynomial induction. I love this one. Walk us through how P-I-N-D works because it doesn't go

up by ones like the dominoes, right? Right. Instead of proving things by going up linearly by one,

P-I-N-D proves things by bit doubling. You prove something works for half of the number X,

and use that to prove it works for the full number X. It processes numbers by looking at their

binary length one bit at a time. Why does doubling matter so much? Because of efficiency. If you go

up by doubling, it only takes a logarithmic number of steps to reach a massive number,

instead of a grueling linear number of steps. Right. If you want to reach a million by counting by

ones, it takes a million steps. If you reach it by doubling, it takes about 20 steps. That's a

huge difference. In the realm of computer science, this bit induction perfectly characterizes

polynomial-time computable functions. Meaning, the kind of efficient algorithms that modern

computers can actually run before the universe dies of heat death. Exactly. P-I-N-D is the exact

logical equivalent of efficient real-world computation. By using this as the engine of proof,

the paper ties the very foundation of mathematical logic directly to what physical computers

can actually calculate. That is deeply satisfying. It roots math in physical reality,

but the paper is also incredibly honest about the fact that this rigorous bounded approach

comes with a cost. Yes, there are trade-offs. There are things we lose. They call it the

category D-gap. Because our induction is bounded, there are some classic sweeping theorems that we

just can't prove universally anymore. Things like Goodstein's theorem or proving the totality

of the Ackermann function. Yes, the Ackermann function is a classic example. It is a mathematical

function that grows so incredibly fast, it makes exponential growth look like it's standing still.

Wow. In standard infinite math, you can boldly claim the Ackermann function is total. It will

eventually compute a final answer for literally any input you give it no matter how large.

But in bounded math, you can't prove that universal claim. No, you can't. Because for any decently

large input, the computation of the Ackermann function would require more steps and more numbers

than your finite universe can hold. The computation would smash through the ceiling.
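
To get a feel for "smashing through the ceiling", here is a minimal sketch of the standard two-argument Ackermann function, evaluated with an explicit stack and a step budget that stands in for the finite universe. The budget mechanism and the name `ackermann` with a `budget` parameter are assumptions made for illustration, not something described in the paper.

```python
from typing import Optional


def ackermann(m: int, n: int, budget: int) -> Optional[int]:
    """Evaluate A(m, n), or return None if it needs more than `budget` steps.

    Rules: A(0, n) = n + 1; A(m, 0) = A(m - 1, 1);
    A(m, n) = A(m - 1, A(m, n - 1)).
    """
    stack = [m]  # pending first arguments; n carries the running value
    steps = 0
    while stack:
        steps += 1
        if steps > budget:
            return None  # ran past the bound: no answer inside this universe
        m = stack.pop()
        if m == 0:
            n += 1
        elif n == 0:
            stack.append(m - 1)
            n = 1
        else:
            stack.append(m - 1)  # outer call, resumed once the inner one finishes
            stack.append(m)      # inner call A(m, n - 1)
            n -= 1
    return n


BUDGET = 100_000  # an arbitrary illustrative cap

print(ackermann(2, 3, BUDGET))  # small inputs finish well within the cap
print(ackermann(3, 3, BUDGET))
print(ackermann(4, 1, BUDGET))  # a slightly larger input exhausts the budget
```

Each specific instance that fits under the cap still gets a definite answer; what is lost is only the blanket claim that every input does.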

I can see traditional mathematicians arguing that we are losing important math by having this

category D-gap. It feels like a downgrade. But let me try an analogy here. Go for it.

In classic math, we are trying to theoretically prove that a car can drive forever.

But in the physical world that is impossible, it will eventually break down or the tires will

blow out or it will run out of gas. Right. In bounded math, we aren't proving the car drives forever.

We are just proving that it can drive to any specific finite destination that we actually

have enough gas to reach. We can still prove every specific finite instance of Goodstein's theorem

or the Ackermann function. We just can't make the arrogant, physically impossible claim about

forever. I completely agree with your analogy. It is a matter of epistemic honesty. A foundational

system that refuses to assert magical, infinite properties for objects that it doesn't actually

need is a stronger, more honest foundation. Yeah. The category D-gap is real. But it is incredibly

narrow. And importantly, it exists entirely in a realm that is far beyond the limits of human

or computer calculation anyway. So what does this all mean? Let's bring this directly back to you

listening to this right now. Think about the physical world you interact with. The calculus that

ensures the bridges you drive on don't collapse, the geometry that designs the aerodynamics of airplanes,

the computer science algorithms, routing data to your smartphone. None of that actually needs the

concept of infinity to function. None of it. This deep dive shows that you can have a completely

rigorous self-contained paradox-free mathematical system based entirely on finite bounds.

It perfectly fits that desire to have a thorough, grounded understanding of how things work

without the bloated paradoxical baggage of infinite information. It is practical, it is precise

and above all, it works. And taking this entirely finite approach leaves us with a rather profound

implication about reality itself. Oh, how so? Think about modern physics. In the real world,

the observable universe isn't infinite. It actually has a holographic bound. Physicists estimate

that the entire observable universe contains around 10 to the power of 185 Planck scale cells.

Which is a massive number, but it is a strictly finite bounded number. Exactly. So what if

infinity never actually existed in nature? What if infinity only ever entered physics and mathematics

as a piece of calculational scaffolding? Just a temporary tool we built to hold the structure

up while we figured out the hard math? Yes. And when you finally pull that scaffolding away,

you don't find an infinite continuous void. You might find that the universe operates exactly

like bounded set theory. Wow, a perfectly finite, rigorously computable reality, where infinity

was never anything more than a myth we invented to make the equations a little easier to write down.

So the next time you find yourself counting and you assume the bedrock of reality just goes on

forever. Remember the edge of the map. That's a thought for you to ponder long after the deep

dive ends.