
One Thing: The Pentagon vs. AI Power Players: Did Anyone Win?

About this Episode

Welcome back to One Thing. I'm David Rind, and a messy fight over AI red lines in the military gets existential.

Are we just saying that you're not allowed to be a company that has political ideas that are different from the people in power?

Stick around.

This is from a video posted to the White House X Account the other day.

It mixes war footage, some of it very real, American missile strikes on Iran, planes taking off, but some of it is also apparently from the video game Call of Duty.

Not everyone loved this mashup.

Critics said real people are dying in this war; multiple US service members have been killed. This is not a video game, it's no joke.

The outrage didn't seem to bother the White House, though. Comms director Steven Cheung wrote in streamer slang, "W's in the chat, boys."

But it's worth asking, if that's how the administration is selling the war on social media, how seriously are they taking negotiations with potential defense contractors?

So to start, AI companies have been working with the military for several years now.

This is Hadas Gold. She's CNN's AI correspondent, and I wanted her to catch me up on a major story that's been playing out over the past few weeks.

It involves the Defense Department and some of the biggest AI companies in Silicon Valley, and it could have major implications for the future of American war fighting.

Because, like Hadas said, this AI technology is already being used by the Pentagon.

It reportedly played a role in both the January operation in Venezuela, as first reported by the Wall Street Journal, and the week-old war with Iran.

So when it comes to which company the military works with, and how this tech gets used, the stakes are sky high.

Anthropic, which has the Claude AI system, was until recently really the only company whose AI model could be used on the military's classified systems, which makes it special.

But the Pentagon a while ago wanted Anthropic to change some of the policies in their contract to allow them to use their system for, quote, all lawful uses.

Anthropic was mostly okay with that, but had two major red lines. They were concerned about two things.

One was AI being used in autonomous weapons, so weapons that are controlled by AI without necessarily a human in the loop.

And AI being used in the mass surveillance of US citizens. And Anthropic's point of view on this is that, on the autonomous weapons, they say AI just is not reliable enough yet to be used in these systems.

And on the second, they believe that the laws and regulations of the United States have not really caught up to where we are on the advances in the technology.

And they just believe that they need to be updated before you can really use AI in mass surveillance.

The Pentagon's point of view on this was we need to be able to use the tools that we contract you with for any reason.

We can't go to you in the middle of a war and say, hey, we need to be able to do XYZ. Can you give us permission?

Are you cool with that?

Yeah, yeah, they're saying, like, we don't ask Boeing for permission on how to use its planes.

We're not going to do the same with an AI model.

Hadas says the Pentagon took a hard stance here. They basically said if you don't agree to our terms, not only will we cancel your contract, we'll deem you a supply chain risk, basically banning any government entity from using the product.

That kind of blacklist thing is normally reserved for adversaries like Russia or China. It isn't usually slapped on American companies.

The showdown between the US Pentagon and Anthropic is barreling towards its deadline without a deal in sight now.

So all of this came to a head on Friday, February 27th. This was the day when, at 5:01 p.m., the Pentagon said if Anthropic didn't agree, then they were going to do the supply chain risk designation and cancel their contract.

We already had known beforehand that Anthropic was not going to agree. They had said they could not in good conscience accede to the Pentagon's request.

The President blasted Anthropic in this long post today, saying, quote, we don't need it, we don't want it, and we will not do business with them again.

And then President Donald Trump actually surprised everyone when he posted on Truth Social that the whole of the government was now going to have to stop using Anthropic.

And then he was giving them a six-month window to remove their AI model from all of their systems, calling them a woke company.

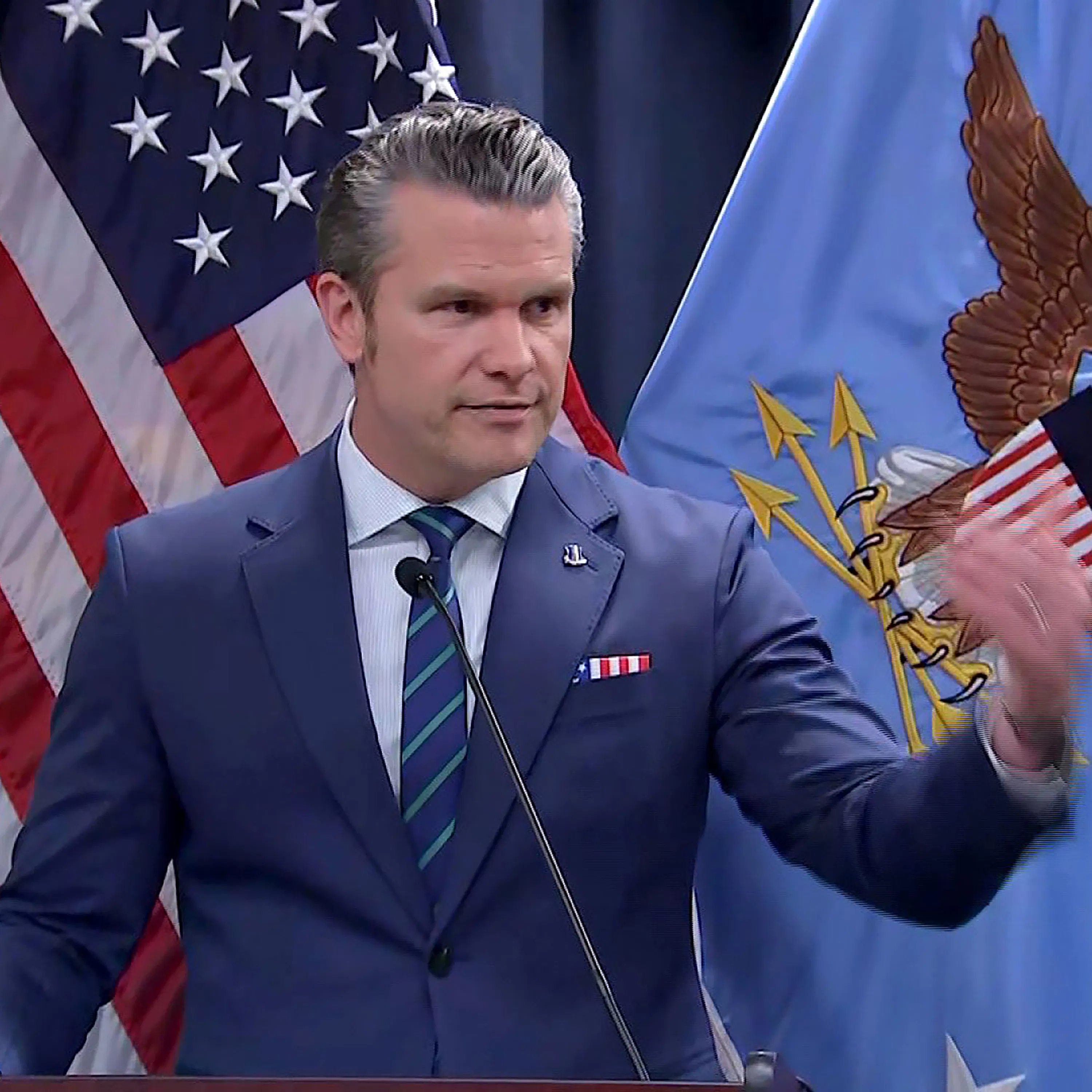

But even so, there was still a possibility that things could work out. But then, shortly after 5 p.m., Defense Secretary Pete Hegseth posted that Anthropic would be designated a supply chain risk, meaning that all military contractors, in his telling, would have to stop using Anthropic if they work for the military.

Not just in their military work, he claimed, but in any commercial work. There's some legal debate over that.

Moments before we just came on the air tonight, Anthropic responded to what you just heard from the Pentagon, saying, quote, no amount of intimidation or punishment from the Department of War will change our position on mass domestic surveillance or fully autonomous weapons.

We will challenge any supply chain risk designation in court.

I don't personally think the Pentagon should be threatening DPA against these companies.

Another surprising thing of all of this was the unity we saw, at least initially, from the tech community.

OpenAI, which is ChatGPT's maker: their CEO, Sam Altman, came out on Friday morning and said they actually have the same red lines as Anthropic when it comes to any contract with the Pentagon for them to be using ChatGPT in their classified systems.

For all the differences I have with Anthropic, I mostly trust them as a company and I think they really do care about safety and I've been happy that they've been supporting our war fighters.

I'm not sure where this is going to go.

But then a few hours later, late Friday, OpenAI actually announced that they had signed on to a contract with the Pentagon, seemingly swooping in and taking Anthropic's place.

And they claimed that their red lines, which they said were the same, were upheld in this contract.

And there was kind of a huge mess, and legal questions that followed.

Well, yeah, did we even know that they were, like, competing for this contract? And how did this all happen?

So all of the major AI companies have been working with the military on classified systems.

And it had been known that they had all been working to try to get on to classified systems.

I should note, xAI, Elon Musk's company, had agreed to this before and said, yeah, you can use it for whatever the heck you want.

We don't have red lines.

But OpenAI said that they had found a way that they believed that those red lines on autonomous weapons and mass surveillance were being upheld.

But this caused a huge uproar.

Yeah, I mean, how do the people working at this company feel about that?

If Sam Altman, even just a few hours before, was saying, Anthropic is taking a stand here, we agree with it.

And then a few hours later, it's, oh, we figured it out. It's all good.

OpenAI employees didn't feel great about this.

I was speaking to sources at the company.

And there was a lot of frustration, not only with just, you know, having this deal with the Pentagon,

but also how it all went down and how it seemed as though it was rushed through so quickly, you know, done in the late hours of Friday.

And then also what happened over the next few days was that it seemingly was getting updated.

So then Sam Altman does, like, a public ask-me-anything on X, with people asking him questions.

And then, come Monday, OpenAI says we've updated our contract, which we believe further locks down these red lines.

But the employees I spoke to said that they were just frustrated with how it looked, with the narrative of how it felt so rushed out.

And that they were also frustrated that Anthropic was being hailed as this hero.

And they were being kind of portrayed as these villains.

If you go outside of Anthropic's office, there are all these messages in chalk on the sidewalks, thanking them.

OpenAI's office has some different messages in chalk.

Sam Altman ended up having an all hands meeting with all of the employees on Tuesday.

And he did acknowledge both in that meeting and in a memo to staff that the whole process looked opportunistic and sloppy.

And that he wished it hadn't been as rushed through as it was, but that he thought he was just trying to really de-escalate the situation.

And on Thursday, the Pentagon formally issued the supply chain risk designation to Anthropic.

But importantly, it was actually of a narrower scope than I think a lot of people feared.

Because there was a fear that if the supply chain risk designation was so broad, that could mean that almost anybody that Anthropic wanted to do business with couldn't work with them if they also wanted to be able to do work with the US military, which obviously has a lot of contractors out there. That could impact Anthropic's business.

And so there is a sense that this narrow designation is a bit of a step down from what had initially been threatened.

OpenAI says their agreement with the Pentagon allows their systems to be used for all lawful purposes, consistent with applicable law, operational requirements, and well-established safety and oversight protocols.

They say their agreement explicitly states that their tools will not be used to conduct domestic surveillance.

And they're sticking by their red line that their systems will not be used to direct autonomous weapons.

That is all stuff Anthropic had insisted on in the first place.

So it begs the question: why was the Pentagon willing to play ball with OpenAI and not Anthropic?

I think this really comes down to an issue of like personalities and politics.

Anthropic has been labeled the woke company by the administration, by David Sacks, who's the White House AI czar.

They are backing political groups that are trying to work for more AI regulation, which is not the White House's point of view.

The White House has been trying to prevent states from regulating AI on a state level.

And so there is a political background here.

OpenAI's president, Greg Brockman, has donated millions of dollars to President Trump and his political groups.

And you know, Sam Altman has been seen as being closer to the administration meeting at the White House and things like that.

Yeah, I've seen him literally next to the president multiple times.

So there is a question of was this just an issue of personalities clashing?

Yeah, I guess, so, does this moment say more about the Trump administration, namely the federal government effectively blacklisting a company that wouldn't bend the knee?

Or does it say more about where AI companies like OpenAI are now and what they're willing to do to stay ahead of the curve?

I think this is much more about the Trump administration because, as Axios has pointed out, the Trump administration is treating China's DeepSeek AI model better than a homegrown American company that is widely seen as having one of, if not the best, AI models out there. Like, fine, don't work with a company if you don't want to.

If you're the US government, you don't agree with them.

But the flip side of that, and the irony, is that we have reporting from the Washington Post and the Wall Street Journal and CBS that the military is literally using Anthropic's product as we speak in this operation in Iran.

And so I don't know how two things can be true. How can something be both so dangerous it has to be a supply chain risk, and yet, then, why is the military literally using it right now?

And a lot of people's answer to that is, well, this is just a punitive punishment.

How does this affect the average person who isn't like a defense contractor or working in the military?

Like, what does this mean just for the average person using these chatbots for X, Y and Z? Like, what should we make of all of this?

Well, for one thing, Anthropic's Claude has shot up in popularity for the everyday person.

I think a lot of people didn't know what Anthropic or Claude were.

They only knew of ChatGPT, but it shot up to the top of the App Store.

But, you know, what systems our government relies on in their AI systems will matter because as we turn to AI models more and more for every sort of task, whatever rules and, you know, weights and measures and all these things that go into how an AI model is created, those will affect the outcome.

So if the government is using an AI system to determine who gets benefits or to determine how your taxes are run, that will have an effect on you. Now, those things are, I would say, a few years out, but that is why people need to pay attention to these types of debates, because down the line, they could affect us all.

It's important to say, Anthropic has long pitched itself as an AI company with a soul. Safety was at the core of everything it did.

However, right in the middle of its beef with the Pentagon, the company said it would relax its self-imposed guardrails because they were hindering its ability to scale and compete in a crowded market.

According to a source familiar with the matter, the policy change was separate and unrelated to the discussions with the Pentagon.

Meanwhile, we asked both the Pentagon and Anthropic about whether Claude is being used in the war with Iran. They did not respond to our request for comment.

We also asked the Pentagon and the White House whether politics played a role in these negotiations with Anthropic and did not get a response.

When we come back, I'm going to talk with someone who helped the Trump administration craft AI policy, but now says what the Pentagon did here is part of an ongoing death rattle. Stick around.

I'm Dr. Sanjay Gupta, host of the Chasing Life Podcast. Dr. Zachary Rubin, he's a pediatric allergist, clinical immunologist, and he just recently released his book called All About Allergies.

With food allergies, we know that they've been around for thousands of years, but just described differently, right?

There's even ancient Chinese texts that talk about avoiding specific foods during pregnancy or different parts of life, so there's definitely evidence that it's been around,

and it's probably as a protective measure against toxins or parasites that were found in food and it became this more exaggerated response.

Listen to Chasing Life, streaming now, wherever you get your podcasts.

My principal role there was holding the pen, as you might say, on the AI action plan.

This is Dean Ball. He's currently a senior fellow at the Foundation for American Innovation.

But in April of 2025, he joined the White House Office of Science and Technology Policy to help write the Trump administration's AI action plan.

This was a consequential document released last year that outlines how the federal government views AI and how it should be used.

Dean helped write the first draft.

The people were reacting to a draft that was principally written by me.

Did you feel the final version did justice to what you had in mind?

Yes.

Well, so I wanted to talk to you today because you wrote an essay on your Substack on March 2nd called Clawed, C-L-A-W-E-D, pretty clever.

And you framed this whole dust up in pretty dire terms. I want to quote you here.

You said, I consider the events of the last week a kind of death rattle of the old republic, the outward expression of a body that has thrown in the towel.

I mean, that's pretty serious. Look, what did you mean by that?

What I mean by that is I'm not saying this is some watershed moment or this is like the cause of the death of the republic or something like that.

That's not my observation at all.

My observation instead is that like the death rattle is a very, very subtle in the grand scheme of things, a subtle indicator of a body that is in the process of dying.

And so what I mean by that is that a great deal of the people who have defended the administration here have basically done so on the grounds that like, well, what else did you expect?

Of course their private property won't be maintained. Like, they're making this powerful technology and they're political enemies of the administration.

Like, what else did you expect? And it's like, well, wait, like, are we just saying that you're not allowed to be a company that has political ideas that are different from the people in power?

Have we just given up on the idea of the First Amendment there?

And that's the thing that I think really goes a bridge too far is this idea of, you know, if you don't do business with us on our terms, we're going to destroy your company.

That can't possibly be the way our country works, right?

Because that would be an abrogation of private property rights. Because, like, what is to stop the government from, you know, going to any random business in the economy and saying, yeah, we want to procure your services and we want you to do it on these terms? And on another level.

If in fact, I think a lot of people look at the fact patterns here and they come to the conclusion that instead of this being a principled matter about usage restrictions and DOD contracts, that this is instead quite frankly about politics, and they don't like Anthropic's politics.

They don't like the fact that Anthropic has, you know, leaders who have given money to the Democratic Party.

They don't like the fact that they hired former officials of the Biden administration.

And if it's a political thing, well, then we're in First Amendment territory, right? And it's like, well, it can't be the case that you get retaliated against for your political views, right?

And to the extent that's happened before it obviously has happened.

I was going to say you can't honestly be surprised, right? Just based on how this administration has kind of gone after political enemies in various other ways.

Prior administrations and prior administrations too. This is my point. This is why I wrote the article. I'm not saying that this is some watershed moment. Maybe it's worse than what we've seen in the past.

But ultimately, it's just a continuation of these same trends, which have all been gradual diminishing of these basic bedrock principles of our republic.

And that's why I think it's a slow death.

Well, on the actual points that Anthropic was taking issue with, their red lines, did any of them sound unreasonable to you?

I mean, no, and they didn't sound unreasonable to the Trump Department of Defense, you know, eight months ago.

Never, right? Like, so, no. Autonomous lethal weapons and mass domestic surveillance both seem like pretty reasonable red lines.

I would say like, you know, the government having access to advanced artificial intelligence to do with what it wants.

Even within the domain of purely lawful use, there are potential civil liberties and other types of concerns that I think all Americans should have about that regardless of political stripe.

And I hope that Congress can pass some restrictions on such usage that are real.

And then beyond that, like, I think US government use of these models, under any circumstances, should raise legitimate civil liberties concerns.

And it has, you know, it's been a concern of mine for years, right?

It's one of the animating things that got me into this field, as, you know, someone on the center right, because I'm concerned about the prospect of government abuse of power using this technology.

And the fact that on top of those existing concerns, the government is now asserting that no one can tell them, you know, there are no restrictions on their use that can be imposed in contract.

It's certainly cause to be concerned, for sure.

Yeah, I mean, is that disheartening for you at all, to have done this work for the administration and then to see this as a result?

Like, was that ever a possibility in your mind?

Yes, this was always a possibility in my mind.

And the reason has nothing to do with the Trump administration and it has everything to do with the structures of power.

It's obvious that AI is going to be extremely powerful. It's obvious that in some ways it will challenge the traditional power structures in ways that implicate politics and the state.

And so it was always very clear to me that the birthing of machine intelligence was going to happen in conflict with the government and would be profoundly political.

So I knew that everything up to and including government seizure of the national labs was a distinct possibility.

I expected it would take a little bit longer. I expected this, but I'm not shocked that it's happening here because again, like, you can talk a big game about deregulation and wanting to let the innovators innovate all you want.

But the reality is that the government has the incentives that it has and those cut across party lines.

So, like, is it disheartening to me to see this happen?

Look, yeah, if they're successful, then everything we did on the action plan and all the other stuff that this administration has tried to do to let AI thrive, it all goes in the toilet.

None of it matters.

At the same time, I think they probably fail most likely.

And the work on the action plan continues apace, and there are many dedicated people in the government that are working on it.

You know, I applaud their work. I continue to cheerlead them. I'll continue to speak positively about them. I'm not declaring war on the administration in any way. I'm saying this is a bad idea.

Well, Dean, thanks very much for your time. I really appreciate it.

Of course. Thank you so much.

That's all for us today. Thank you as always for listening. We really appreciate it. If you have five seconds, maybe ten seconds, leave a rating and a review wherever you listen and help other people find the show. We'll be back here again on Wednesday. I'll talk to you then.

I'm CNN Tech reporter Claire Duffy. This week on the podcast, Terms of Service, there's a growing category of products aimed specifically at addressing women's unique health needs.

These tools and services are sometimes known as femtech, and they can provide big opportunities and benefits, but they can also come with some risks.

To walk us through all of this, I spoke with Bethany Corbin. Bethany is an attorney and CEO of FemInnovation, where she advises startups, clinicians and healthcare organizations.

In my opinion, what it really does is gives us a collective language to talk about women's healthcare innovation and the tools that are out there so that we can take control of our healthcare experiences and know how to advocate for ourselves in a system that's probably not been designed to advocate for us.

Listen to CNN's Terms of Service wherever you get your podcasts.